Async Is Not Concurrency: A Hard‑Learned Lesson

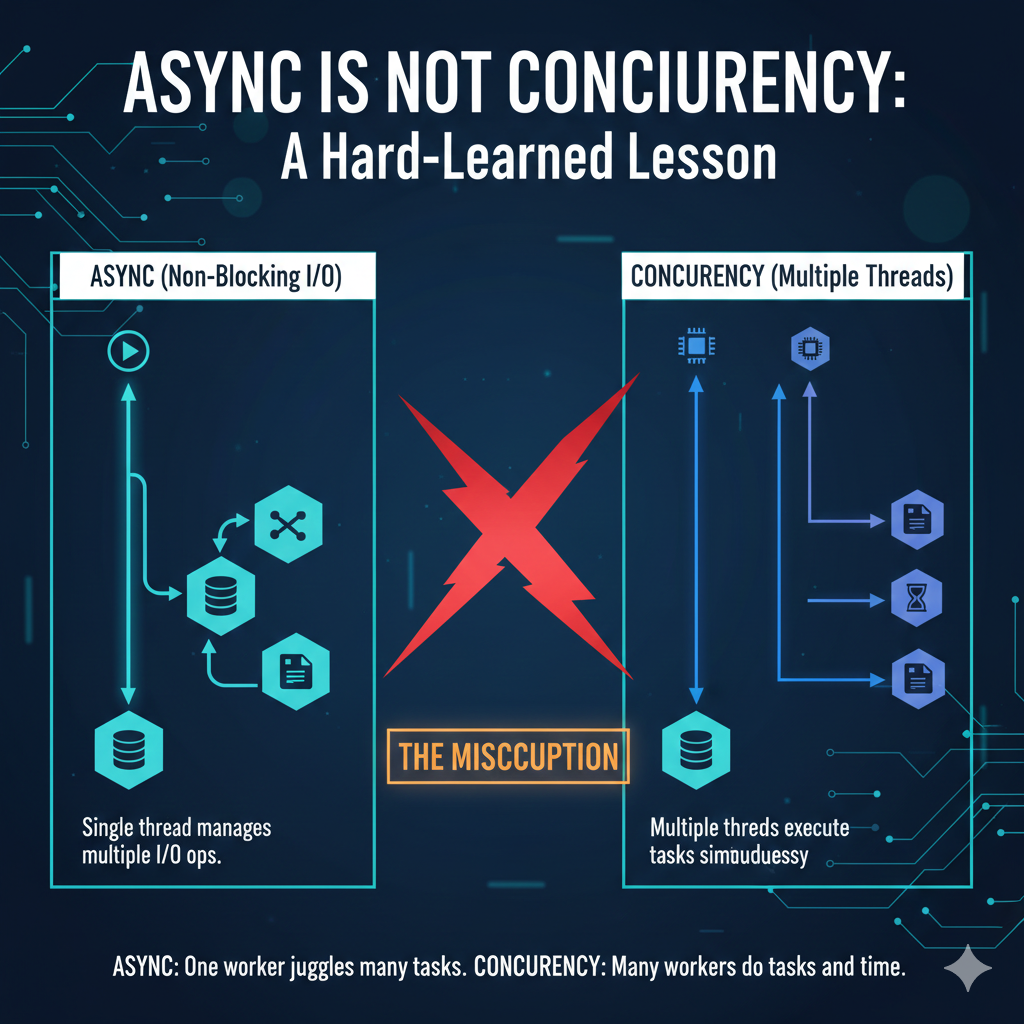

Async code helps systems avoid blocking while they wait. Concurrency determines how much work a system can actually perform at the same time.

Confusing the two often works fine in development—but production traffic has a way of exposing the difference very quickly.

The Assumption That Caused Trouble

Early in my backend career, I assumed that using async/await automatically meant my system was concurrent.

Requests were non-blocking. Response times looked healthy. Load tests passed.

It felt reasonable to assume the system would scale naturally.

What I hadn’t internalized yet was this:

Async improves how we wait. It does not increase how much work we can do.

Async made requests look fast

The code stayed clean and readable

No obvious bottlenecks appeared early

Under low traffic, this distinction barely matters. Under sustained load, it becomes critical.

What Async Actually Does

Async programming is fundamentally about efficiency while waiting.

When a request is blocked on I/O—database queries, network calls, disk access—async allows the runtime to do other work instead of idling.

In runtimes like Node.js, this usually means a single main thread coordinated by an event loop.

await db.query("SELECT * FROM users WHERE id = ?", [id]);

The thread initiates the I/O, yields control, and resumes execution when the result is available.
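
To make the waiting behavior concrete, here is a minimal sketch that simulates three I/O calls with timers. `fakeQuery` is a hypothetical stand-in for a real database call, not part of any library. Because the thread is free while each call waits, the three waits overlap instead of adding up.

```javascript
// Simulated I/O: resolves after `ms` milliseconds without occupying the thread.
// `fakeQuery` is a hypothetical stand-in for a real database call.
function fakeQuery(label, ms) {
  return new Promise((resolve) => setTimeout(() => resolve(label), ms));
}

async function runOverlappedQueries() {
  const start = Date.now();
  // All three waits are in flight at once; the thread is idle in between.
  const results = await Promise.all([
    fakeQuery("users", 100),
    fakeQuery("orders", 100),
    fakeQuery("invoices", 100),
  ]);
  return { results, elapsedMs: Date.now() - start };
}

runOverlappedQueries().then(({ results, elapsedMs }) => {
  console.log(results, `finished in about ${elapsedMs} ms`);
});
```

The total should land near 100 ms rather than 300 ms, because nothing here needs the CPU while it waits.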

This model is extremely effective for:

Database queries

Network calls

Disk operations

The problem begins when we assume the same mechanism helps with CPU-bound work.

What Concurrency Really Means

Concurrency is about making progress on multiple units of work at the same time.

For CPU-bound work, that usually requires parallel execution across multiple CPU cores.

This is not something async guarantees by default.

Concurrency is typically achieved through:

Multiple threads

Multiple processes

Isolated workers

The defining property is independence: one unit of work should not starve others of CPU time.

Async can exist without concurrency. Concurrency can exist without async.

Treating them as interchangeable leads to fragile system designs.

Where This Failed in Production

The failure surfaced after we shipped a feature that handled file uploads.

Each request involved parsing input, transforming data, and persisting results.

The implementation was fully async and looked clean from a code perspective.

Once traffic increased, CPU usage spiked to 100 percent.

Latency increased across the service

Unrelated endpoints slowed down

Throughput dropped sharply

The system was not blocked on I/O—it was blocked on computation.

The root cause was simple: CPU-bound work was running on the same event loop that handled incoming requests.
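
The effect can be reproduced in a few lines. This is a sketch, not our production code: `burnCpu` and `handleUpload` are hypothetical names, and the busy loop stands in for the parsing and transformation work. The 10 ms timer plays the role of an unrelated request waiting for its turn.

```javascript
// Hypothetical CPU-heavy step: spins for roughly `ms` milliseconds.
function burnCpu(ms) {
  const end = Date.now() + ms;
  let iterations = 0;
  while (Date.now() < end) iterations++;
  return iterations;
}

// Marked async, but nothing inside ever yields to the event loop.
async function handleUpload() {
  burnCpu(200);
  return "done";
}

function measureTimerDelay() {
  return new Promise((resolve) => {
    const scheduled = Date.now();
    // Stands in for an unrelated request that should be served in ~10 ms.
    setTimeout(() => resolve(Date.now() - scheduled), 10);
    handleUpload(); // runs to completion synchronously, holding up the timer
  });
}

measureTimerDelay().then((delayMs) => {
  console.log(`10 ms timer fired after ${delayMs} ms`);
});
```

The timer cannot fire until the busy loop finishes, so it reports a delay of roughly 200 ms. The `async` keyword on `handleUpload` changes nothing, because there is no point inside it where the thread is released.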

Why Async Failures Are Hard to Detect Early

Async systems often behave well during development and light testing.

They feel responsive and rarely show obvious warning signs.

Low traffic hides contention

Short tasks mask CPU pressure

Local testing rarely reflects production load

The failure mode typically appears only under sustained load, when CPU pressure turns small inefficiencies into system-wide slowdowns.
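
One way to surface this earlier is to watch event loop delay directly. The sketch below wraps `monitorEventLoopDelay` from Node's `perf_hooks` module; the wrapper name `startLoopLagMonitor` and the 10 ms resolution are my own assumptions, not something from the original incident.

```javascript
const { monitorEventLoopDelay } = require("node:perf_hooks");

// Starts sampling event loop delay. The histogram reports nanoseconds.
function startLoopLagMonitor(resolutionMs = 10) {
  const histogram = monitorEventLoopDelay({ resolution: resolutionMs });
  histogram.enable();
  return {
    // Returns max and 99th-percentile delay in milliseconds, then resets
    // the histogram so the next snapshot covers a fresh window.
    snapshot() {
      const stats = {
        maxMs: histogram.max / 1e6,
        p99Ms: histogram.percentile(99) / 1e6,
      };
      histogram.reset();
      return stats;
    },
    stop() {
      histogram.disable();
    },
  };
}
```

In a service, `snapshot()` could be called on an interval and exported as a metric. Any alert threshold is an assumption that needs tuning against a baseline from the idle service.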

The Mental Model I Use Now

Today, I separate these concepts deliberately.

Async answers how efficiently a system waits.

Concurrency answers how much work the system can handle at once.

I/O-bound work → async works well

CPU-bound work → async alone is dangerous

This distinction now guides most of my architectural decisions.

How I Design Systems Today

I identify CPU-heavy work early in the design phase.

Tasks such as large JSON parsing, encryption, compression, or data transformation are treated as separate concerns.

Worker threads

Background jobs

Dedicated processing services

worker.postMessage({ payload });
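
A fuller version of that hand-off might look like the sketch below, using Node's `worker_threads` module. The worker source is inlined with `eval: true` only to keep the example in one file, and `transform` and `runInWorker` are hypothetical names for illustration.

```javascript
const { Worker } = require("node:worker_threads");

// Worker source kept inline (eval: true) so the sketch is a single file.
// `transform` is a hypothetical stand-in for the CPU-heavy step.
const workerSource = `
  const { parentPort } = require("node:worker_threads");
  function transform(payload) {
    let sum = 0;
    for (const value of payload) sum += value * value;
    return sum;
  }
  parentPort.on("message", ({ payload }) => {
    parentPort.postMessage(transform(payload));
  });
`;

// Runs one payload on a fresh worker thread and resolves with its result.
function runInWorker(payload) {
  return new Promise((resolve, reject) => {
    const worker = new Worker(workerSource, { eval: true });
    worker.once("message", (result) => {
      resolve(result);
      worker.terminate();
    });
    worker.once("error", reject);
    worker.postMessage({ payload });
  });
}

runInWorker([1, 2, 3]).then((result) => console.log(result)); // logs 14
```

The main thread only awaits a message here, so the event loop stays free for other requests while the computation runs elsewhere. Starting a worker per call is wasteful under real load; a reusable pool would be the more likely design.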

Scaling is handled structurally—through multiple processes and load balancing—not by assuming async code will automatically use all available CPU cores.

Why This Lesson Applies Everywhere

This misunderstanding is not limited to Node.js.

It appears in:

Async Python

Java futures and reactive streams

Go goroutines

The tools differ, but the underlying mistake is the same: non-blocking code is mistaken for parallel execution.

Closing Thought

Async is a powerful technique, but it is not a scaling strategy by itself.

Concurrency is an architectural choice that must be made explicitly.

Once you internalize the difference, you stop relying on syntax for performance and start building systems that scale for the right reasons.